You can verify the result using the numpy.allclose() function. The NumPy code is as follows.Ī = np.array(,, ])Įxecuting the above script, we get the matrix We will use NumPy's () function to find its inverse.

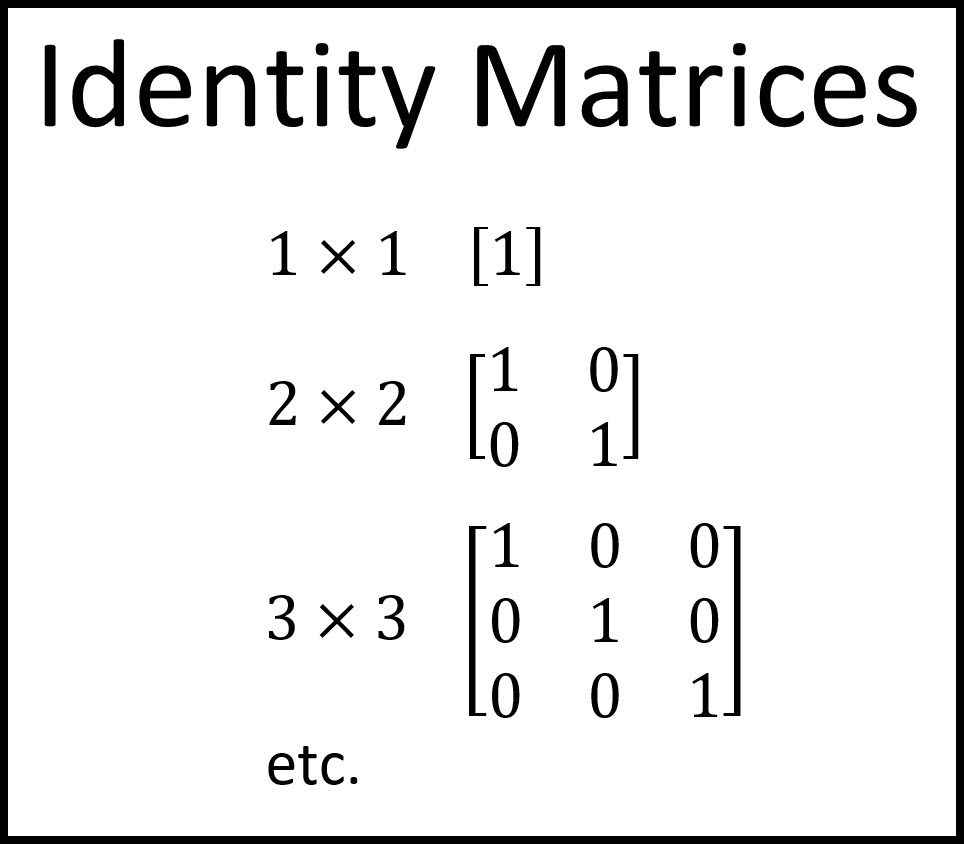

Now we pick an example matrix from a Schaum's Outline Series book Theory and Problems of Matrices by Frank Aryes, Jr 1. As such, my intentions are purely to present an intuitive discussion.In Linear Algebra, an identity matrix (or unit matrix) of size $n$ is an $n \times n$ square matrix with $1$'s along the main diagonal and $0$'s elsewhere.Īn identity matrix of size $n$ is denoted by $I_$.Īn inverse of a matrix is also known as a reciprocal matrix. Practitioners more experienced than I would surely find flaws in it. Note: The above discussion is by no means mathematically rigorous. Hope this gives an intuitive understanding of the relation between the determinant and the inverse. Thus, we can see why for a singular matrix it's determinant is zero, and there exists no inverse. If we were to recover the original point from the transformed point, we wouldn't know which of the two points to recover to. Think of $A$ as a function that maps two different points to the same point.
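The inversion and verification steps described above can be sketched as follows. The entries of the matrix here are hypothetical, chosen only for illustration, and are not the example from the book. The calls `numpy.linalg.inv()` and `numpy.allclose()` are the standard NumPy functions.

```python
import numpy as np

# Hypothetical 3x3 matrix, chosen only for illustration (not the book's example).
A = np.array([[1.0, 2.0, 3.0],
              [0.0, 1.0, 4.0],
              [5.0, 6.0, 0.0]])

# Compute the inverse with NumPy's linear algebra routine.
A_inv = np.linalg.inv(A)

# A matrix times its inverse should equal the identity, up to rounding error.
identity = np.eye(3)
is_inverse = np.allclose(A @ A_inv, identity) and np.allclose(A_inv @ A, identity)
print(is_inverse)
```

Using `numpy.allclose()` rather than an exact equality check is a deliberate choice: floating-point rounding means the product is usually only approximately the identity.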

Since matrices are simply representations of linear maps with respect to a basis of the domain and codomain, the question of whether a matrix is invertible is essentially the same as whether a function from one set to another is invertible (the function being the linear map). A function is invertible if and only if it is surjective and injective (i.e. bijective). It just so happens that when you translate this condition into linear algebra for linear maps, it becomes the condition that the determinant of the matrix is non-zero.

To make things simpler, we will only consider linear maps between spaces of equal finite dimension (corresponding to considering only square matrices). Then injectivity alone is sufficient for bijectivity of a linear map. We aim to show that a linear map is injective if and only if its matrix has non-zero determinant.

(1) A linear map is injective $\iff$ the images of basis vectors of the domain are linearly independent.

(2) A set of $n$ vectors in $n$-dimensional space is linearly independent $\iff$ the volume of the parallelepiped they span is non-zero. You can reassure yourself by imagining this in 3 dimensions.

(3) The determinant of a square matrix measures the (hyper)volume of the parallelepiped spanned by the column vectors of the matrix. So non-zero determinant $\iff$ the column vectors span a parallelepiped of non-zero volume.

(3.5) The column vectors of a matrix representing a linear map are the images of the basis vectors of the domain under that map. This is more-or-less the definition of the matrix of a linear map.

Following (1) $\iff$ (2) applied to the images of the basis vectors $\iff$ (3) and (3.5), you get the equivalence between bijectivity of a linear map and its matrix having non-zero determinant.

To conclude, since bijectivity is equivalent to invertibility, a linear map is invertible if and only if its matrix has non-zero determinant.

EDIT: To answer your question about what the inverse matrix is: suppose $M : V \to W$ is an invertible linear map. Given a basis of $V$ and a basis of $W$, there is a corresponding matrix $M$ representing the linear map $M$. Then the inverse matrix $M^{-1}$ is the matrix representing the inverse map $M^{-1} : W \to V$ with respect to the same bases. If instead the determinant is zero, we lose information along at least one of the dimensions.
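A small numerical illustration of the link between the determinant and invertibility, assuming NumPy is available. The two matrices and the two points are hypothetical examples: the first matrix has linearly independent columns, while the second has parallel columns, so it sends two different points to the same image and cannot be inverted.

```python
import numpy as np

# Hypothetical invertible matrix: its columns span a parallelogram of non-zero area.
M = np.array([[2.0, 1.0],
              [1.0, 3.0]])
det_M = np.linalg.det(M)  # 2*3 - 1*1 = 5

# Hypothetical singular matrix: the second column is twice the first.
S = np.array([[1.0, 2.0],
              [2.0, 4.0]])
det_S = np.linalg.det(S)  # 1*4 - 2*2 = 0

# S is not injective: two different points have the same image, (2, 4).
p = np.array([2.0, 0.0])
q = np.array([0.0, 1.0])
same_image = np.allclose(S @ p, S @ q)

# Inverting S fails, since the original point cannot be recovered from its image.
try:
    np.linalg.inv(S)
    inversion_failed = False
except np.linalg.LinAlgError:
    inversion_failed = True

print(det_M, det_S, same_image, inversion_failed)
```

In floating-point practice a determinant is rarely exactly zero, so comparing it against a tolerance (for example with `numpy.isclose()`) is usually safer than testing for equality.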
